A new white paper from Bader Law reveals deep flaws in TikTok’s AI content moderation systems. According to the study, titled “The Viral Injury Epidemic,” most high-risk or harmful videos on the platform remain accessible long after users report them: 60% of flagged dangerous TikTok videos are still online more than 48 hours after being reported, often gaining millions of views before removal.

The report raises concerns about how slowly automated moderation responds to fast-moving viral challenges. That delay puts minors at risk and could expose platforms to legal scrutiny.

Key Findings From the Study

The study identifies a series of significant moderation gaps:

- 60% of flagged harmful videos remain public after 48 hours.

- 27% of flagged videos continue gaining engagement even after TikTok has reviewed them.

- AI detection lags about 72 hours behind viral peaks, meaning harmful content typically trends before moderation reacts.

- The “Benadryl,” “Blackout,” and “Fire” challenges were among the most frequently re-uploaded after deletion.

- Only 11% of removed videos had age restrictions applied to their subsequent re-uploads.

- “Repost loops,” where users download and re-share deleted videos, make harmful trends difficult to control once they gain traction.

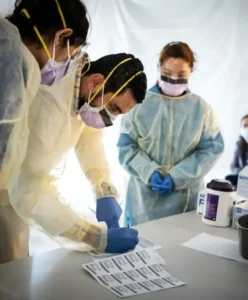


Moderation Delays Across TikTok’s Most Dangerous Categories

The report found that harmful content across several categories stayed online for extended periods:

- Physical injury stunts remained visible for more than two days on average and were often re-uploaded.

- Substance-related challenges generated close to a million views before removal.

- Asphyxiation trends stayed online long enough to reach large audiences.

- Videos depicting dangerous driving circulated widely before enforcement action was taken.

- Cosmetic and body-image challenge videos had the longest visibility time, frequently surpassing 72 hours.

Across all categories, harmful content was consistently seen and shared long before removal.


Why TikTok’s AI Struggles to Remove Harmful Content

The study outlines several weaknesses in AI-powered moderation:

1. AI prioritizes copyright and misinformation over physical harm.

Injury-related challenges often slip past early filters designed for other types of violations.

2. “Contextual harm” is difficult for algorithms to identify.

Videos framed as humor, dares, or stunts are harder for AI to classify as dangerous.

3. Takedowns occur in delayed waves.

Rather than removing flagged videos immediately, TikTok often deletes them in batches, giving harmful content time to spread.

4. Human oversight is limited.

Manual moderation accounts for fewer than 10% of removals, leaving AI systems to make most decisions.

5. Delayed removals may carry legal consequences.

Legal analysts note that prolonged exposure could weaken platform protections in negligence or harm-related lawsuits.

Why These Failures Matter

The study’s authors warn that AI-driven moderation has become reactive rather than preventative, allowing high-risk content to accumulate millions of impressions before any action is taken.

Public health experts argue that improving algorithmic transparency, adding stricter age gating, and shortening removal cycles could prevent many avoidable injuries each year. Bader Law notes that the issue may become central to future legal debates, especially as policymakers discuss potential changes to Section 230 protections.
